Keeping Up with Claude Code

Last week's Claude Code updates for newbs.

For the record, I'm not calling anyone a newb. If you clicked, you self-identified. This is a digest of last week's top Claude Code updates, chosen by how much they will affect the typical user's day-to-day experience. The selection was whittled down by Claude and explained by me. These are the top five out of last week's 138 changelog updates because, to my everlasting disappointment, I'm not Cady Heron, and the limit does exist.

As I've been writing this, Anthropic has pushed 61 new fixes today. We are not going to keep up in real time. I'm not certain this will be a recurring series, since it was pretty time-consuming, but I did learn a lot. Throughout the rest of this post, all mentions of Claude refer to Claude Code, as opposed to other features, such as Cowork or Design.

Top releases (mostly) by impact

Default effort for Pro/Max subscribers on Opus 4.6 and Sonnet 4.6 is now high (was medium)

2.1.117 April 22, 2026

This improves the output quality for most paying users. However, it comes at a cost. Higher effort means longer processing (I'm not prepared to call it thinking) and more detailed responses, which require more tokens. Per the Claude API Docs glossary, tokens are "the smallest individual units of a language model, and can correspond to words, subwords, characters, or even bytes (in the case of Unicode). For Claude, a token approximately represents 3.5 English characters..." Users on metered subscriptions will hit their token limits more quickly, and API users will pay more per request. Users will have to play around with it to see what level meets their needs. Users can revert to medium effort during a session by typing /effort, or change it at a user or project level.

- User level: In ~/.claude/settings.json, add "effort": "medium" (or "low"/"high")
- Project level: Add it in .claude/settings.json
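To get a feel for why longer responses cost more, here is a minimal sketch that turns the glossary's "about 3.5 English characters per token" figure into a rough estimator. It is only a back-of-the-envelope approximation, not how Claude actually tokenizes text, and the function name `estimate_tokens` is made up for illustration.

```python
import math

# Approximate figure quoted from the Claude API Docs glossary (English text only).
CHARS_PER_TOKEN = 3.5


def estimate_tokens(text: str) -> int:
    """Rough token estimate: character count divided by ~3.5, rounded up."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


if __name__ == "__main__":
    prompt = "Refactor the login handler and add unit tests."
    print(f"{len(prompt)} characters is roughly {estimate_tokens(prompt)} tokens")
```

A real tokenizer will give different counts, especially for code and non-English text, so treat this as a ballpark only.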

Fixed Opus 4.7 sessions showing inflated /context percentages and autocompacting too early — Claude Code was computing against a 200K context window instead of Opus 4.7’s native 1M

2.1.117 April 22, 2026

In the same way context improves collaboration between humans, context from earlier in a session improves Claude's response to each prompt. Claude assembles context by sending the entire conversation (system prompt, tool definitions, memory files, and all prior messages) to the API on every turn. A "turn" is the round-trip travel of the user's prompt to the server, and Claude's response, including any tool calls and their results, back to the terminal. Honestly, real brains are a miracle. This is exhausting. Each turn contains more text as the conversation history becomes longer, using more tokens, which basically represent more resources used per turn. To limit resource expenditure, once the conversation hits 83% of the total number of tokens Claude can send to the server in one turn (the context window), Claude starts sending a summary of the conversation, instead of the full conversation, as context.
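The "each turn resends everything" idea can be sketched in a few lines. This is a toy model with made-up numbers, not Claude Code's actual accounting: `fixed_overhead` stands in for the system prompt, tool definitions, and memory files, and `message_tokens` is a hypothetical list of how many tokens each new exchange adds.

```python
def tokens_sent_per_turn(fixed_overhead: int, message_tokens: list[int]) -> list[int]:
    """Return the context size sent on each turn when the full history is resent."""
    sizes = []
    history = 0
    for tokens in message_tokens:
        history += tokens  # every earlier message stays in the context
        sizes.append(fixed_overhead + history)
    return sizes


if __name__ == "__main__":
    # Hypothetical session: 10,000 tokens of fixed overhead, three exchanges.
    per_turn = tokens_sent_per_turn(10_000, [2_000, 3_000, 5_000])
    print("context per turn:", per_turn)
    print("total sent across the session:", sum(per_turn))
```

The point of the sketch is that the per-turn size only ever grows, so the total sent across a session climbs much faster than the conversation itself.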

Before April 22, Opus 4.7 sessions were calculating context percentages based on a 200k-token context window, instead of Opus 4.7's actual context window, which is 1M tokens. So, when the context sent in a turn reached 166,000 tokens, or 83% of 200k tokens, Claude was sending summarized context, which isn't inherently bad, but could prematurely degrade the quality of the context, and Claude's output. Since the fix, Opus 4.7 begins auto-compacting context when it hits 830k tokens.
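The arithmetic above is easy to check. This sketch assumes the 83% trigger described in this post and uses hypothetical helper names. It shows how the same session looks under the old 200K assumption versus the real 1M window.

```python
AUTOCOMPACT_PERCENT = 83  # trigger point as described in this post


def autocompact_threshold(context_window: int) -> int:
    """Token count at which auto-compaction would start for a given window."""
    return context_window * AUTOCOMPACT_PERCENT // 100


def context_percent(used_tokens: int, context_window: int) -> float:
    """Share of the context window in use, as a percentage."""
    return 100 * used_tokens / context_window


def should_compact(used_tokens: int, context_window: int) -> bool:
    """True once usage reaches the auto-compaction threshold."""
    return used_tokens >= autocompact_threshold(context_window)


if __name__ == "__main__":
    used = 170_000  # hypothetical session size in tokens
    for label, window in (("old 200K assumption", 200_000), ("actual 1M window", 1_000_000)):
        print(label, autocompact_threshold(window),
              context_percent(used, window), should_compact(used, window))
```

With 170,000 tokens in play, the old calculation reports 85% and compacts, while the corrected one reports 17% and leaves the full history alone.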

/resume on large sessions is significantly faster (up to 67% on 40MB+ sessions) and handles sessions with many dead-fork entries more efficiently

2.1.116 April 20, 2026

I don't know how exciting this is, but I didn't know what a dead-fork entry was, so it made the list. In a Claude Code session, users can type /fork to take the session in a different direction, like changing the subject in a conversation. /rewind goes back to an earlier point in the session, which abandons everything after that point. These actions create branching points in the session transcript, with the live conversation being one path through the tree, and the abandoned branches becoming dead-fork entries. If a user ends a session and later types /resume, Claude has to review the entire transcript to determine where it left off. This was taking a long time with large sessions. The fix to /fork writes a summary of the parent conversation for each fork, instead of writing a full copy, so there is less data to review when a session is resumed.
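Here is one way to picture the tree. This is a toy model, not Claude Code's real transcript format: the `Entry` class, its fields, and the IDs are all invented for illustration. Each entry points at its parent, the live conversation is the path from the latest entry back to the root, and everything else is a dead fork.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Entry:
    id: str
    parent: Optional[str]  # None marks the start of the session


def live_path(entries: list[Entry], leaf_id: str) -> list[str]:
    """Walk parent links from the latest entry back to the root."""
    by_id = {e.id: e for e in entries}
    path = []
    current: Optional[str] = leaf_id
    while current is not None:
        path.append(current)
        current = by_id[current].parent
    return list(reversed(path))


def dead_entries(entries: list[Entry], leaf_id: str) -> list[str]:
    """Entries that are not on the live path, i.e. abandoned branches."""
    live = set(live_path(entries, leaf_id))
    return [e.id for e in entries if e.id not in live]


if __name__ == "__main__":
    # Hypothetical session: "c" was abandoned after rewinding to "b".
    session = [Entry("a", None), Entry("b", "a"), Entry("c", "b"),
               Entry("d", "b"), Entry("e", "d")]
    print("live:", live_path(session, "e"))
    print("dead:", dead_entries(session, "e"))
```

The more abandoned branches a transcript carries, the more entries a resume has to wade through that never end up on the live path.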

Auto mode: include "$defaults" in autoMode.allow, autoMode.soft_deny, or autoMode.environment to add custom rules alongside the built-in list instead of replacing it

2.1.118 April 23, 2026

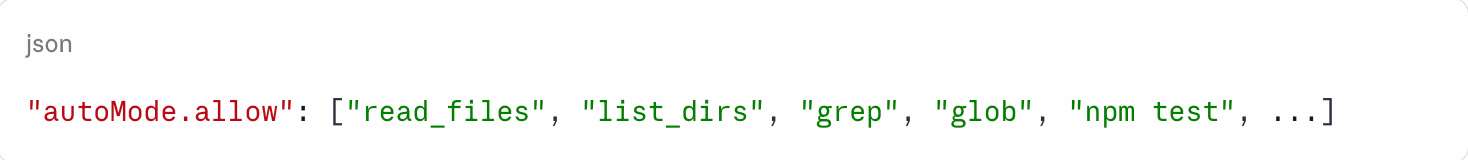

Users can run Claude Code in auto mode, which lets Claude run commands without asking for permission every time. It has built-in rules, called $defaults, which define what it can do automatically, and what it should ask permission for. Prior to this change, if a user wanted to add a rule to autoMode.allow, such as "allow npm test", the custom autoMode.allow would replace the defaults, which are finely tuned and quite helpful. A user would have to know and list every default rule they wanted to keep, plus their customizations.

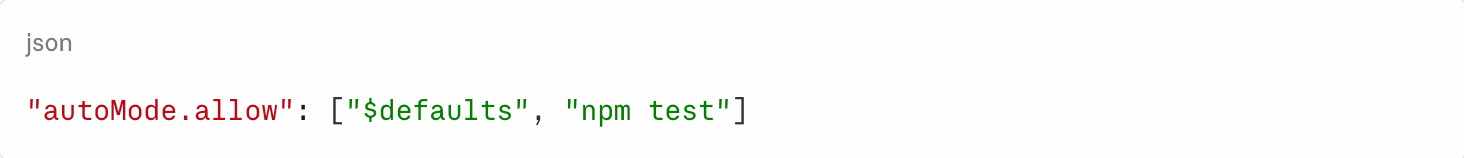

Now, a user can enter $defaults as an item in the list, and it will auto-expand to all the built-in rules.
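The expansion can be sketched like this. It is an illustration of the behavior described in the changelog, not Claude Code's implementation. The function name `expand_rules` and the placeholder rule strings are assumptions, since the real built-in list isn't reproduced in this post.

```python
DEFAULTS_TOKEN = "$defaults"


def expand_rules(custom: list[str], builtin: list[str]) -> list[str]:
    """Replace each "$defaults" item with the built-in rules, keeping order.

    Without the token, the custom list stands alone, which mirrors the old
    replace-everything behavior.
    """
    expanded = []
    for rule in custom:
        if rule == DEFAULTS_TOKEN:
            expanded.extend(builtin)
        else:
            expanded.append(rule)
    return expanded


if __name__ == "__main__":
    # Placeholder names; the actual built-in rules are not listed here.
    builtin = ["built-in rule A", "built-in rule B"]
    print(expand_rules(["allow npm test"], builtin))
    print(expand_rules(["$defaults", "allow npm test"], builtin))
```

The first call shows the custom rule replacing the defaults entirely, and the second shows the custom rule sitting alongside them.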

Before:

Now, the user can just write:

Fixed scrolling up in fullscreen mode snapping back to the bottom every time a tool finishes

2.1.119 April 23, 2026

This is similar to text message conversations on an iPhone. If you've ever tried to look up something from earlier in a conversation, and the other participant starts typing, the UI automatically scrolls back to the most recent message. Claude's not doing this anymore in fullscreen mode. It's weird that Claude is the tool and the conversation partner. I hope we don't all go crazy.